Benchmark snapshot

This page is a short reference for one public benchmark run comparing GoModel and LiteLLM on OpenAI-compatible traffic.

The full article contains the complete write-up, all charts, and the original discussion: GoModel vs LiteLLM Benchmark: Speed, Throughput, and Resource Usage.

This benchmark is a point-in-time snapshot published on March 5, 2026. Treat it as data, not dogma. Gateway performance depends on workload, provider mix, deployment setup, and tuning.

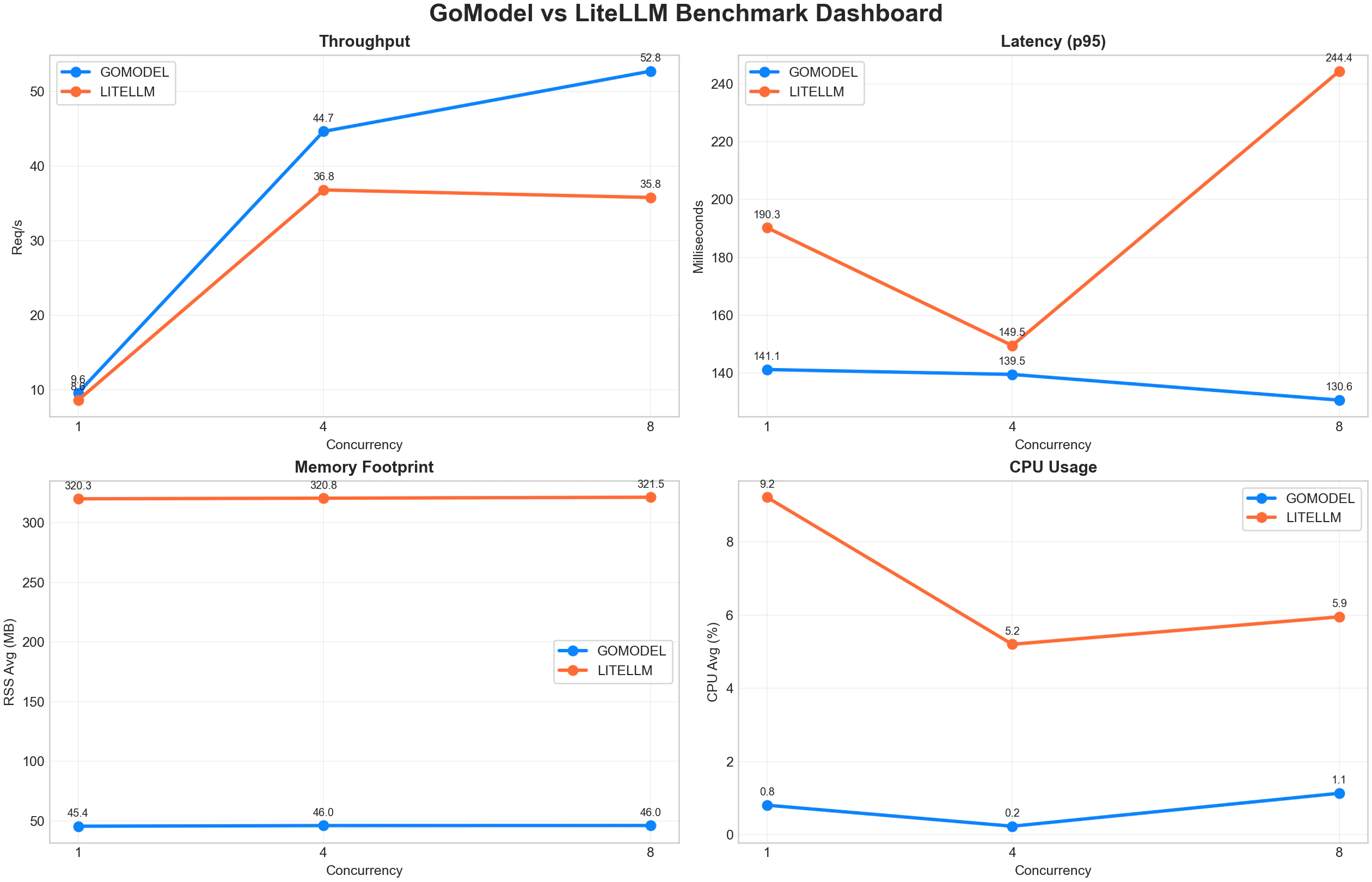

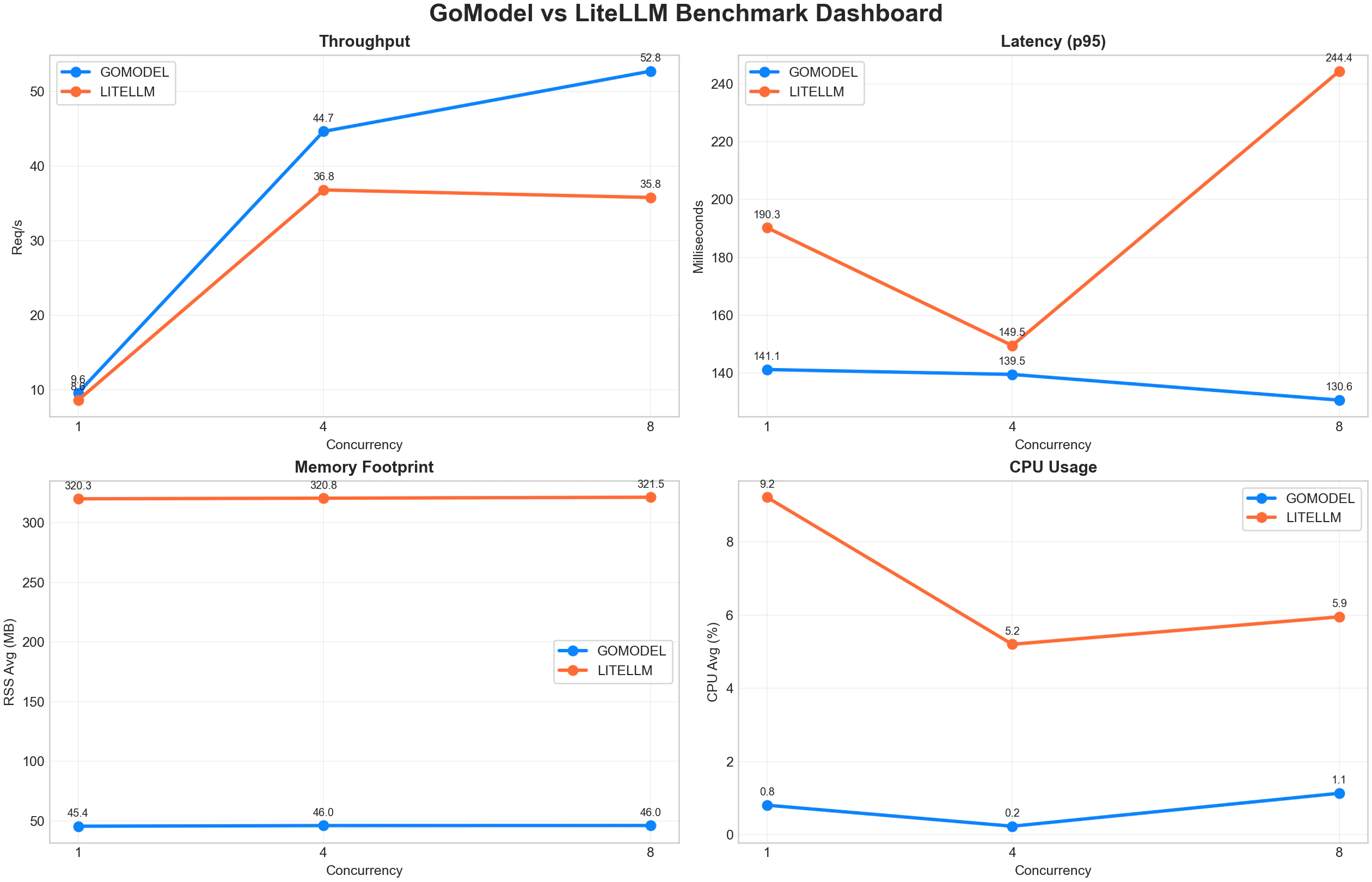

Visual snapshot

Chart source and full context: Original benchmark post.


At a glance

In this benchmark run, GoModel came out ahead on the main operational signals most teams care about:

- Added latency

- Throughput under concurrency

- CPU overhead

- Memory overhead

Test shape

The comparison used a simple like-for-like setup:

- OpenAI-compatible `/v1/chat/completions`
- The same prompt and request shape on both sides
- Concurrency levels of 1, 4, and 8
- A focus on clean runs with 0% errors

- Metrics including req/s, latency percentiles, CPU usage, and RSS memory
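To make the metrics in this list concrete, here is a minimal sketch of how req/s and latency percentiles can be derived for one concurrency level. It is not the `bench` CLI from this repository: `run_level`, `percentile`, and `fake_send` are hypothetical names, and the nearest-rank percentile method is an assumption that may differ from what the published run used.

```python
import math
import time
from concurrent.futures import ThreadPoolExecutor


def percentile(sorted_ms, pct):
    """Nearest-rank percentile over an ascending list of latencies (ms)."""
    if not sorted_ms:
        return None
    rank = max(1, math.ceil(pct / 100 * len(sorted_ms)))
    return sorted_ms[rank - 1]


def run_level(send, total_requests, concurrency):
    """Call send() total_requests times using `concurrency` worker threads."""

    def timed_call(_):
        start = time.perf_counter()
        try:
            send()
            ok = True
        except Exception:
            ok = False
        return ok, (time.perf_counter() - start) * 1000.0

    wall_start = time.perf_counter()
    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        results = list(pool.map(timed_call, range(total_requests)))
    wall_s = time.perf_counter() - wall_start

    # Only successful calls contribute to throughput and latency figures.
    latencies = sorted(ms for ok, ms in results if ok)
    successes = len(latencies)
    return {
        "success": successes,
        "error_pct": 100.0 * (total_requests - successes) / total_requests,
        "req_per_s": successes / wall_s,
        "p50_ms": percentile(latencies, 50),
        "p95_ms": percentile(latencies, 95),
        "p99_ms": percentile(latencies, 99),
    }


def fake_send():
    """Stand-in for a real POST to the gateway's /v1/chat/completions."""
    time.sleep(0.005)


for level in (1, 4, 8):
    print(level, run_level(fake_send, total_requests=12, concurrency=level))
```

In a real run, `fake_send` would be replaced by a function that posts the same prompt to each gateway. CPU and RSS sampling of the gateway process is left out of this sketch.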

This docs page keeps only the primary comparison matrix from the blog post.

Reference table

| Gateway | Concurrency | Success | Error % | Req/s | p50 ms | p95 ms | p99 ms | CPU avg % | RSS avg MB |
|---|---|---|---|---|---|---|---|---|---|
| GoModel | 1 | 12/12 | 0.00 | 9.61 | 86.4 | 141.1 | 144.4 | 0.81 | 45.4 |
| GoModel | 4 | 12/12 | 0.00 | 44.66 | 56.1 | 139.5 | 139.5 | 0.23 | 46.0 |
| GoModel | 8 | 12/12 | 0.00 | 52.75 | 98.4 | 130.6 | 131.1 | 1.13 | 46.0 |
| LiteLLM | 1 | 12/12 | 0.00 | 8.64 | 96.2 | 190.3 | 213.9 | 9.21 | 320.3 |
| LiteLLM | 4 | 12/12 | 0.00 | 36.82 | 104.7 | 149.5 | 149.5 | 5.20 | 320.8 |
| LiteLLM | 8 | 12/12 | 0.00 | 35.81 | 188.7 | 244.4 | 244.9 | 5.95 | 321.5 |

Key readouts

Some useful reads from that March 5, 2026 run, where GoModel showed:

- Lower p95 latency at every tested concurrency level.
- Higher throughput across the benchmark matrix.
- Lower memory use: about 45-46 MB RSS, while LiteLLM stayed near 320-321 MB.
- Less CPU in these runs.

At the highest tested concurrency, GoModel reached 52.75 req/s versus LiteLLM at 35.81 req/s.
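The readouts above can be turned into ratios with a few lines of arithmetic. The sketch below copies the req/s, p95, CPU, and RSS values from the reference table; the `RESULTS` layout and the `ratios` helper are illustrative names, not part of the benchmark tooling.

```python
# Values copied from the reference table, keyed by gateway and concurrency.
# Tuple order: (req/s, p95 ms, CPU avg %, RSS avg MB).
RESULTS = {
    "GoModel": {
        1: (9.61, 141.1, 0.81, 45.4),
        4: (44.66, 139.5, 0.23, 46.0),
        8: (52.75, 130.6, 1.13, 46.0),
    },
    "LiteLLM": {
        1: (8.64, 190.3, 9.21, 320.3),
        4: (36.82, 149.5, 5.20, 320.8),
        8: (35.81, 244.4, 5.95, 321.5),
    },
}


def ratios(concurrency):
    """Return ratios where a value above 1.0 favors GoModel."""
    go_rps, go_p95, go_cpu, go_rss = RESULTS["GoModel"][concurrency]
    lite_rps, lite_p95, lite_cpu, lite_rss = RESULTS["LiteLLM"][concurrency]
    return {
        "throughput_x": go_rps / lite_rps,  # GoModel req/s over LiteLLM req/s
        "p95_x": lite_p95 / go_p95,         # LiteLLM p95 over GoModel p95
        "cpu_x": lite_cpu / go_cpu,         # LiteLLM CPU over GoModel CPU
        "rss_x": lite_rss / go_rss,         # LiteLLM RSS over GoModel RSS
    }


for level in (1, 4, 8):
    r = ratios(level)
    print(
        f"concurrency {level}: "
        f"throughput {r['throughput_x']:.2f}x, p95 {r['p95_x']:.2f}x, "
        f"cpu {r['cpu_x']:.1f}x, rss {r['rss_x']:.1f}x"
    )
```

At concurrency 8 this works out to roughly 1.47x the throughput and about 7x less RSS for GoModel in this run. With only 12 requests per cell, treat these ratios as indicative rather than precise.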

Reproduce it yourself

All the tooling used in the published benchmark is available in this repository.

Prerequisites

- Go 1.26.3+

- Python 3.10+ with `matplotlib` and `numpy`
- `jq`, `curl`
- A Groq API key (or a key for any other OpenAI-compatible provider, if you adjust the script)
- `litellm[proxy]` (`pip install "litellm[proxy]"`)

Scripts

The benchmark suite lives in docs/about/benchmark-tools/:

| File | Purpose |
|---|---|
| `compare.sh` | Builds GoModel, starts both gateways, runs the full benchmark matrix, and writes a `REPORT.md` |
| `bench_main.go` | Source for the `bench` CLI that sends requests and collects latency + process metrics |
| `plot_benchmark_charts.py` | Generates per-metric charts and a combined dashboard from the JSON results |

Quick start

    # 1. Clone GoModel and set up your .env with GROQ_API_KEY
    git clone https://github.com/ENTERPILOT/GoModel.git
    cd GoModel
    echo "GROQ_API_KEY=gsk_..." > .env

    # 2. Run the full comparison (builds GoModel, starts LiteLLM, benchmarks both)
    bash docs/about/benchmark-tools/compare.sh

    # 3. Generate charts from the latest result
    pip install matplotlib numpy
    python3 docs/about/benchmark-tools/plot_benchmark_charts.py benchmark-results/<timestamp>

Results are written to a directory under `benchmark-results/` containing JSON result files, gateway logs, and a `REPORT.md` with the results table.
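If you run the comparison several times, a small helper can pick the most recent results directory to pass to the plotting script. This is a sketch under assumptions: `latest_run` and `list_artifacts` are hypothetical helpers, and the most recent run is assumed to be the subdirectory with the newest modification time. The layout inside each run directory is not inspected.

```python
from pathlib import Path


def latest_run(results_root="benchmark-results"):
    """Return the most recently modified subdirectory, or None if there is none."""
    root = Path(results_root)
    if not root.is_dir():
        return None
    runs = [p for p in root.iterdir() if p.is_dir()]
    if not runs:
        return None
    return max(runs, key=lambda p: p.stat().st_mtime)


def list_artifacts(run_dir):
    """Return the sorted file names found directly inside one run directory."""
    return sorted(p.name for p in Path(run_dir).iterdir() if p.is_file())


run = latest_run()
if run is None:
    print("no benchmark runs found")
else:
    print(f"latest run: {run}")
    for name in list_artifacts(run):
        print(f"  {name}")
```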

Tuning

You can override defaults via environment variables:

    REQUESTS=100 CONCURRENCIES="1 4 8 16" MAX_TOKENS=16 bash docs/about/benchmark-tools/compare.sh

See `compare.sh` for the full list of knobs.
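When driving repeated runs from a script, the same overrides can be passed programmatically. The sketch below only uses the three variables shown above (`REQUESTS`, `CONCURRENCIES`, `MAX_TOKENS`); `build_env` and `run_compare` are hypothetical wrappers, and any other knob would need to be checked against `compare.sh` itself.

```python
import os
import subprocess

COMPARE_SCRIPT = "docs/about/benchmark-tools/compare.sh"


def build_env(requests=None, concurrencies=None, max_tokens=None, base=None):
    """Copy the base environment and apply only the overrides that were given."""
    env = dict(os.environ if base is None else base)
    if requests is not None:
        env["REQUESTS"] = str(requests)
    if concurrencies is not None:
        # compare.sh takes a space-separated list, e.g. "1 4 8 16".
        env["CONCURRENCIES"] = " ".join(str(c) for c in concurrencies)
    if max_tokens is not None:
        env["MAX_TOKENS"] = str(max_tokens)
    return env


def run_compare(**overrides):
    """Run compare.sh from the repository root with the given overrides."""
    return subprocess.run(
        ["bash", COMPARE_SCRIPT],
        env=build_env(**overrides),
        check=True,
    )


# Preview the overrides without starting a benchmark run.
preview = build_env(requests=100, concurrencies=[1, 4, 8, 16], max_tokens=16, base={})
print(preview)
```

Calling `run_compare(requests=100, concurrencies=[1, 4, 8, 16], max_tokens=16)` from the repository root would then be equivalent to the shell one-liner above.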

Why this page is short

This page is intentionally shorter and more operational than the blog version. It exists so docs readers can see the benchmark result quickly without reading a full article inside the product docs. If you want the full narrative, more charts, and the original context, use the source post.

No single benchmark settles the question for every environment. If you are evaluating gateways seriously, reproduce the test against your own traffic and infrastructure.